Facebook Is Building An Instagram For Kids Under The Age Of 13

Executives at Instagram are planning to build a version of the popular photo-sharing app that can be used by children under the age of 13, according to an internal company post obtained by BuzzFeed News.

“I’m excited to announce that going forward, we have identified youth work as a priority for Instagram and have added it to our H1 priority list,” Vishal Shah, Instagram’s vice president of product, wrote on an employee message board on Thursday. “We will be building a new youth pillar within the Community Product Group to focus on two things: (a) accelerating our integrity and privacy work to ensure the safest possible experience for teens and (b) building a version of Instagram that allows people under the age of 13 to safely use Instagram for the first time.”

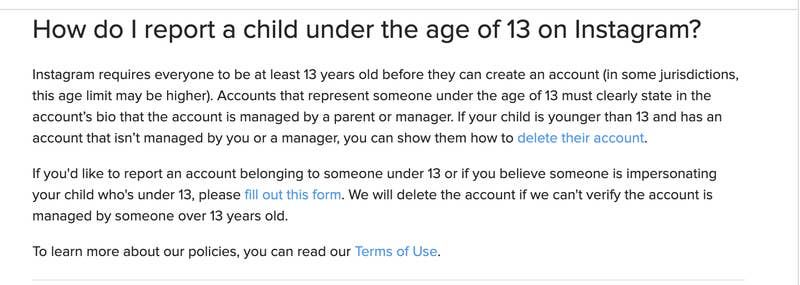

Current Instagram policy forbids children under the age of 13 from using the service.

According to the post, the work would be overseen by Adam Mosseri, the head of Instagram, and led by Pavni Diwanji, a vice president who joined parent company Facebook in December. Previously, Diwanji worked at Google, where she oversaw the search giant’s children-focused products, including YouTube Kids.

The internal announcement comes two days after Instagram said it needs to do more to protect its youngest users. Following coverage and public criticism of the abuse, bullying, or predation faced by teens on the app, the company published a blog post on Tuesday titled “Continuing to Make Instagram Safer for the Youngest Members of Our Community.”

That post makes no mention of Instagram’s intent to build a product for children under the age of 13, but states, “We require everyone to be at least 13 to use Instagram and have asked new users to provide their age when they sign up for an account for some time.”

The announcement lays the groundwork for how Facebook — whose family of products is used by 3.3 billion people every month — plans to expand its user base. While various laws limit how companies can build products for and target children, Instagram clearly sees kids under 13 as a viable growth segment, particularly because of the app’s popularity among teens.

In a short interview, Mosseri told BuzzFeed News that the company knows that “more and more kids” want to use apps like Instagram and that verifying their age was a challenge, given that most people don’t get identification documents until they are in their mid-to-late teens.

“We have to do a lot here,” he said, “but part of the solution is to create a version of Instagram for young people or kids where parents have transparency or control. It’s one of the things we’re exploring.”

Mosseri added that it was early in Instagram’s development of the product and that the company doesn’t yet have a “detailed plan.”

Priya Kumar, a PhD candidate at the University of Maryland who researches how social media affects families, said a version of Instagram for children is a way for Facebook to hook in young people and normalize the idea “that social connections exist to be monetized.”

“From a privacy perspective, you're just legitimizing children’s interactions being monetized in the same way that all of the adults using these platforms are,” she said.

Kumar said children who use YouTube Kids often migrate to the main YouTube platform, which is a boon for the company and concerning for parents.

“A lot of children, either by choice or by accident, migrate onto the broader YouTube platform,” she said. “Just because you have a platform for kids, it doesn’t mean the kids are going to stay there.”

The development of an Instagram product for kids follows the 2017 launch of Messenger Kids, a Facebook product aimed at children between the ages of 6 and 12. After the product’s launch, a group of more than 95 advocates for children’s health sent a letter to Facebook CEO Mark Zuckerberg, calling for him to discontinue the product and citing research that “excessive use of digital devices and social media is harmful to children and teens, making it very likely this new app will undermine children’s healthy development.”

Facebook said it had consulted an array of experts in developing Messenger Kids. Wired later revealed that the company had a financial relationship with most of the people and organizations that had advised on the product.

In 2019, the Verge reported that a bug in Messenger Kids allowed children to join groups with strangers, despite Facebook’s claims that the product had strict privacy controls.

The error meant that “thousands of children were left in chats with unauthorized users, a violation of the core promise of Messenger Kids,” according to the Verge.

Facebook said the bug had only affected a “small number of group chats.”

Instagram users already face issues with bullying and harassment. A 2017 survey by Ditch the Label, an anti-bullying nonprofit, found that 42% of people between the ages of 12 and 20 had experienced cyberbullying on Instagram, the highest percentage of any platform measured. Roughly two years later, Instagram announced features aimed at combating bullying.

“Teenagers have always been cruel to one another. But Instagram provides a uniquely powerful set of tools to do so,” reported the Atlantic.

"What we aspire to do — and this will take years, I want to be clear — is to lead the fight against online bullying," Mosseri said at a Facebook event in 2019.

That year, the National Society for Prevention of Cruelty to Children in the UK reported that it had found a “200% rise in recorded instances in the use of Instagram to target and abuse children.” The targeting and grooming of young children by older men on Instagram was also the focus of a story published on Medium titled “I’m a 37-Year-Old Mom & I Spent Seven Days Online as an 11-Year-Old Girl.”

The moves Instagram announced earlier this week are intended to curb such abuse. The company said it would limit messages between teens and adults they don’t follow and “make it more difficult” for adults to find and follow teens.

“This may include things like restricting these adults from seeing teen accounts in 'Suggested Users', preventing them from discovering teen content in Reels or Explore, and automatically hiding their comments on public posts by teens,” the company’s post reads.

While Instagram is trying to make itself safe for teens, it’s unclear how its executives believe they can make the platform safe for children under the age of 13. Instagram head Mosseri, who has previously faced safety issues at home, covers the faces of his young children with emojis when posting images of them to his public account.

“Interesting you blurred your kids faces while millions of moms/dads are posting their kids faces on your platform,” one follower wrote on a photo Mosseri posted on Halloween last year. “What do you know that they don’t about how these images are used?”

Mosseri told BuzzFeed News that because he was a public figure, security concerns had led him to hide his children’s faces in those images. However, he still maintains private Instagram accounts for each of his own children to share their upbringings with his family members and friends around the world.

“I think sharing sensitive information is important to be careful about,” he said.