Views on TikTok hashtags hosting eating disorder content continue to climb, research says

TikTok videos using hashtags previously identified as hosting eating-disorder content are continuing to attract views, new research by the Centre for Countering Digital Hate has found.

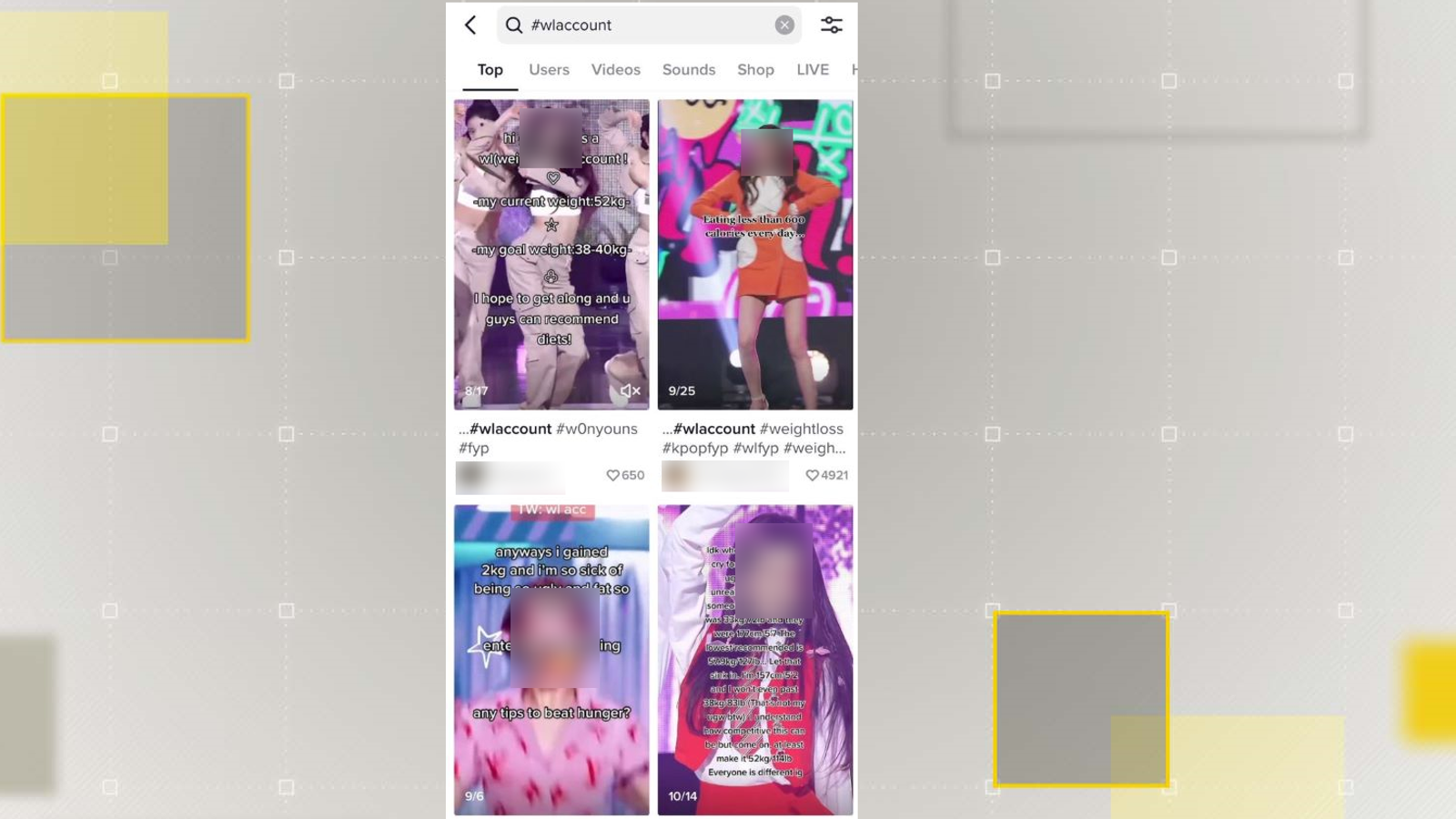

A December report by the campaign group identified "coded" hashtags where users could access potentially harmful videos promoting restrictive diets and so-called "thinspo" content, designed to encourage harmful weight loss.

New analysis of those hashtags by the organisation found that since the study, just seven had been removed from the platform and only three carried a health warning on the UK version of the app.

But TikTok said it had removed content that violates its rules, which prohibit the promotion or glorification of eating disorders.

The Centre for Countering Digital Hate (CCDH) said the hashtags it found still on the platform had amassed 1.6 billion more views, which the UK's leading eating disorder charity Beat has called "extremely concerning".

"There is no excuse for harmful hashtags and videos being on TikTok in the first place," Andrew Radford, Beat's Chief Executive said.

"The company should immediately identify and remove damaging content as soon as it is uploaded," he told Sky News.

Content warning: this article contains references to eating disorders.

But users will often make subtle edits to terminology so they can continue posting potentially harmful material about eating disorders without being spotted by TikTok's moderators.

'Coded' language to avoid detection

In its December report, the CCDH identified 56 TikTok hashtags using "coded" language, under which it found potentially harmful eating disorder content.

The CCDH also found 35 of the hashtags contained a high concentration of pro-eating disorder videos, while it said 21 contained a mix of harmful content and healthy discussion.

Among the material found in both categories were videos promoting unhealthy weight loss, restrictive diets and "thinspo".

In November, the views across these hashtags stood at 13.2 billion. When CCDH reviewed them in January, it found that the number of views on videos using the hashtags had grown to more than 14.8 billion.

Since the original study, CCDH says seven of the hashtags it identified had been removed from the platform altogether.

Four of those hosted predominantly pro-eating disorder content, while three contained both positive and harmful videos.

In the review, the CCDH found that, when accessed by US users, 37 of the hashtags it identified carried a safety warning directing users to the US's leading eating disorder charity.

However, the same review found that for UK users, just three of those hashtags carried the same kind of warning.

Centre for Countering Digital Hate found 56 hashtags associated with eating disorder content. 35 of those contained a high concentration of pro-eating disorder content.

'Outcry' by parents

"TikTok is clearly capable of adding warnings to English language content that might harm but is choosing not to implement this for English language content in the UK," said Imran Ahmed, CEO of the Centre for Countering Digital Hate.

"There can be no clearer example of the way the enforcement of purportedly universal rules of these platforms are actually implemented partially, selectively, and only when platforms feel under real pressure by governments," he told Sky News.

The new research also indicates that most of the people accessing material under these hashtags are young.

Using TikTok's own data analytics tool, CCDH found that 91% of views on 21 of the hashtags came from users under the age of 24. This tool, however, is limited as TikTok does not include data for any users under the age of 18.

"Despite an outcry from parents, politicians and the general public, three months later this content continues to grow and spread unchecked," Mr Ahmed added.

"Every view represents a potential victim - someone whose mental health might be harmed by negative body image content, someone who might start restricting their diet to dangerously low levels," he said.

Following CCDH's findings, a group of charities - including the NSPCC, the Molly Rose Foundation and the US and UK arms of the American Psychological Foundation - have called on TikTok to improve its moderation policies in a letter to its head of safety, Eric Han.

Responding to the findings, a spokesperson for TikTok said: "Our community guidelines are clear that we do not allow the promotion, normalisation or glorification of eating disorders, and we have removed content mentioned in this report that violates these rules.

"We are open to feedback and scrutiny, and we seek to engage constructively with partners who have expertise on these complex issues, as we do with NGOs in the US and UK."